Multilingual AI Training and Evaluation Services

Improve AI Performance Across Languages With Expert Human Evaluation

Stepes helps enterprises develop, test, and improve multilingual AI systems through structured data collection, annotation, and human review across 100+ languages.

We support global AI deployments in healthcare, financial services, customer support, and other enterprise environments where multilingual accuracy, consistency, and human review directly affect quality and trust.

Improving AI Performance Across Languages

AI systems are only as effective as the data, training, and evaluation processes behind them. As organizations deploy AI globally, maintaining consistent performance across languages, regions, and real-world user interactions becomes increasingly complex.

Stepes provides multilingual AI training and evaluation services to help enterprises improve AI accuracy, safety, and usability across global markets. Our services include multilingual AI data collection, linguistic annotation, and human evaluation designed to support model training, testing, and continuous optimization.

Unlike traditional AI data providers, Stepes focuses on real-world AI performance. We combine professional native linguists with structured human-in-the-loop workflows to evaluate AI outputs for linguistic accuracy, terminology consistency, cultural relevance, and compliance requirements.

This approach enables organizations to build, validate, and refine AI systems that perform reliably across languages, whether for large language models (LLMs), chatbots, voice assistants, or enterprise AI applications.

What Are Multilingual AI Training and Evaluation Services?

Multilingual AI training and evaluation services support the development, testing, and optimization of AI systems across languages by combining high-quality data, linguistic expertise, and structured human evaluation.

These services typically include:

- High-quality multilingual AI training data collection across diverse languages, dialects, and user scenarios

- Linguistic annotation and labeling for tasks such as intent classification, named entity recognition (NER), sentiment analysis, and instruction tuning

- Human evaluation of AI outputs to assess accuracy, fluency, terminology, and cultural appropriateness

- Functional and contextual testing across languages to validate real-world AI performance

- Ongoing performance validation, benchmarking, and continuous improvement cycles

Unlike basic AI data services, multilingual training and evaluation focus on how AI systems perform in real-world environments. This includes assessing consistency across languages, identifying gaps in model behavior, and refining outputs through human-in-the-loop review.

By combining multilingual data collection, annotation, and evaluation, these services help organizations improve the reliability, safety, and usability of AI systems across global markets. This is especially critical for LLMs, chatbots, voice assistants, and enterprise AI applications, where language quality directly affects user experience and trust.

Core Services

Stepes provides a comprehensive set of multilingual AI training and evaluation services designed to improve AI performance across languages. These services support the full AI lifecycle, from data collection and annotation to human evaluation and continuous optimization.

Human evaluation of AI-generated content across languages to assess real-world performance, including:

- Accuracy and meaning preservation

- Fluency, readability, and natural language flow

- Terminology consistency across domains and languages

- Cultural relevance and localization quality

- Safety, compliance, and policy alignment

This service is critical for LLMs, chatbots, and generative AI systems, where output quality directly affects user experience, trust, and regulatory compliance. Human-in-the-loop review is central to multilingual AI output review: it helps identify errors, inconsistencies, and edge cases that automated systems may miss.

Collection of high-quality multilingual voice and conversational datasets to support speech and conversational AI training, including:

- Natural dialogues and scripted prompts

- Accent, dialect, and regional variation coverage

- Domain-specific conversations (healthcare, finance, customer support, etc.)

- Multi-turn and context-aware interactions

These datasets are used to train and improve voice assistants, speech recognition systems, and conversational AI applications. High-quality multilingual voice and conversation data collection is essential for building AI systems that can understand and respond naturally across languages and user contexts.

Linguistic annotation and labeling services to support supervised learning, model training, and fine-tuning:

- Intent classification and intent mapping

- Named entity recognition (NER)

- Sentiment, tone, and emotion labeling

- Content categorization and moderation labeling

- Instruction tuning and prompt-response datasets

All annotation is performed by professional native linguists with domain expertise, ensuring high data quality, consistency, and alignment with real-world language use. Multilingual text annotation services help improve model understanding and enable more accurate and reliable AI behavior across languages.
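
As an illustration of what a single annotated record might look like, the sketch below combines intent, sentiment, and named entity labels for one utterance and runs a basic span check. It is a minimal example under stated assumptions, not Stepes's actual data format: the class names, field names, and label values (`AnnotatedUtterance`, `EntitySpan`, `DRUG`, `DOSAGE`, `prescription_refill`) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class EntitySpan:
    start: int   # character offset, inclusive
    end: int     # character offset, exclusive
    label: str   # hypothetical label set, e.g. "DRUG", "DOSAGE"


@dataclass
class AnnotatedUtterance:
    text: str
    language: str    # language tag such as "es-MX"
    intent: str      # hypothetical intent name
    sentiment: str   # e.g. "positive", "neutral", "negative"
    entities: List[EntitySpan] = field(default_factory=list)

    def span_errors(self) -> List[str]:
        """Return one message per entity span that is out of range, empty, or overlapping."""
        errors = []
        previous_end = 0
        for span in sorted(self.entities, key=lambda s: s.start):
            if not (0 <= span.start < span.end <= len(self.text)):
                errors.append(f"{span.label}: offsets {span.start}-{span.end} are invalid")
                continue
            if span.start < previous_end:
                errors.append(f"{span.label}: overlaps the previous span")
            previous_end = max(previous_end, span.end)
        return errors

    def entity_texts(self) -> List[Tuple[str, str]]:
        """Return (surface text, label) pairs in the order the entities were annotated."""
        return [(self.text[s.start:s.end], s.label) for s in self.entities]


# Example: a Spanish healthcare utterance with two labeled entities.
text = "Necesito renovar mi receta de metformina 500 mg"
drug_start = text.index("metformina")
dose_start = text.index("500 mg")

utterance = AnnotatedUtterance(
    text=text,
    language="es-MX",
    intent="prescription_refill",
    sentiment="neutral",
    entities=[
        EntitySpan(drug_start, drug_start + len("metformina"), "DRUG"),
        EntitySpan(dose_start, dose_start + len("500 mg"), "DOSAGE"),
    ],
)

print(utterance.span_errors())
print(utterance.entity_texts())
```

Storing entities as character offsets rather than copied strings keeps each label tied to its exact position in the source text, which matters when the same word appears more than once in an utterance.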

Creation and refinement of multilingual training datasets for chatbots and virtual assistants:

- Prompt-response pair generation

- Dialogue design and conversation flow development

- Multilingual intent mapping and localization

- Adaptation of conversational tone and user experience across languages

These services help AI systems better understand user intent, manage multi-turn conversations, and deliver more natural and contextually appropriate responses. Conversational AI training data services are essential for improving user engagement and experience in multilingual environments.

Systematic evaluation of large language models across languages, domains, and user scenarios:

- Prompt testing and structured response scoring

- Hallucination detection and factual accuracy validation

- Instruction-following and task completion evaluation

- Cross-language consistency and output comparison

- Benchmarking across models, datasets, and versions

Multilingual LLM evaluation services enable organizations to validate model performance before deployment and continuously improve AI quality through structured evaluation and feedback loops. The result is more consistent, reliable, and trustworthy AI output across global markets.
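
To make structured response scoring and cross-language comparison more concrete, the sketch below averages reviewer ratings per language and flags any language that trails a reference language by more than a chosen margin. It is an illustrative example only: the rating fields, the 1-5 scale, the `en` reference language, and the 0.5 threshold are assumptions, not a description of Stepes's scoring method.

```python
from collections import defaultdict
from statistics import mean

# Each record is one reviewer's rating of one model response (hypothetical fields, 1-5 scale).
ratings = [
    {"prompt_id": "p1", "language": "en", "accuracy": 5, "fluency": 5},
    {"prompt_id": "p2", "language": "en", "accuracy": 4, "fluency": 5},
    {"prompt_id": "p1", "language": "de", "accuracy": 4, "fluency": 5},
    {"prompt_id": "p2", "language": "de", "accuracy": 4, "fluency": 4},
    {"prompt_id": "p1", "language": "ja", "accuracy": 3, "fluency": 4},
    {"prompt_id": "p2", "language": "ja", "accuracy": 4, "fluency": 5},
]


def mean_scores_by_language(records, criteria=("accuracy", "fluency")):
    """Average each criterion per language."""
    grouped = defaultdict(lambda: defaultdict(list))
    for record in records:
        for criterion in criteria:
            grouped[record["language"]][criterion].append(record[criterion])
    return {
        language: {criterion: mean(values) for criterion, values in by_criterion.items()}
        for language, by_criterion in grouped.items()
    }


def flag_gaps(scores, reference="en", max_gap=0.5):
    """List (language, criterion, gap) where a language trails the reference by more than max_gap."""
    flagged = []
    for language, by_criterion in scores.items():
        if language == reference:
            continue
        for criterion, value in by_criterion.items():
            gap = scores[reference][criterion] - value
            if gap > max_gap:
                flagged.append((language, criterion, round(gap, 2)))
    return sorted(flagged)


scores = mean_scores_by_language(ratings)
print(flag_gaps(scores))  # [('ja', 'accuracy', 1.0)]
```

In this sample data, Japanese accuracy averages 3.5 against 4.5 for English, so it is the only entry flagged for follow-up review; German trails by exactly 0.5 on both criteria and stays within the threshold.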

Real-World Use Cases

Stepes supports a wide range of multilingual AI applications across industries, helping organizations improve AI performance, accuracy, and user experience in real-world environments.

Evaluate chatbot and virtual assistant responses across languages to ensure consistent tone, accuracy, and customer experience. Multilingual AI evaluation helps identify gaps in intent recognition, response quality, and localization, enabling more natural and effective user interactions across global markets.

Train and test speech recognition and voice-enabled AI systems using diverse accents, dialects, and real conversational patterns. Multilingual voice data collection and evaluation improve speech accuracy, intent understanding, and user experience across regions and languages.

Assess multilingual performance of large language models by testing prompts, scoring responses, and identifying inconsistencies across languages. Structured human evaluation helps reduce hallucinations, improve factual accuracy, and refine model behavior for global deployment.

Label and evaluate multilingual content to improve classification accuracy, safety detection, and policy enforcement. Multilingual annotation and evaluation help AI systems correctly identify harmful, sensitive, or non-compliant content across different languages and cultural contexts.

Validate AI outputs in regulated and high-risk environments such as healthcare, financial services, and legal applications. Multilingual evaluation and linguistic QA help ensure accuracy, terminology consistency, and compliance with industry-specific requirements across global markets.

Why Stepes

Stepes delivers multilingual AI training and evaluation services with a strong focus on language quality, real-world performance, and enterprise reliability. Unlike traditional AI data providers, we combine global linguistic expertise with structured human evaluation to help organizations improve how AI systems perform across languages.

Access professional native linguists in 100+ languages, each with deep domain knowledge in areas such as healthcare, financial services, legal, and enterprise applications. This ensures that multilingual AI data, annotation, and evaluation reflect real-world language use, not just literal translations or generic labeling.

Our workflows combine AI-assisted automation with expert human review to deliver consistent and reliable results. Human-in-the-loop evaluation helps identify nuanced linguistic issues, cultural context gaps, and edge cases that automated processes alone cannot capture, resulting in higher-quality AI outputs.

Stepes is built on decades of experience in professional translation, linguistic QA, and terminology management. This foundation enables us to deliver multilingual AI services with a level of consistency, accuracy, and linguistic control that goes beyond traditional annotation and data labeling providers.

We focus on how AI systems perform in real user environments across languages. Instead of simply generating data, we evaluate outputs for accuracy, fluency, cultural relevance, and usability, helping organizations improve AI performance where it matters most.

Our services are supported by secure infrastructure, controlled workflows, and audit-ready processes designed for enterprise use. We support compliance requirements, data security standards, and scalable project management across global teams and languages.

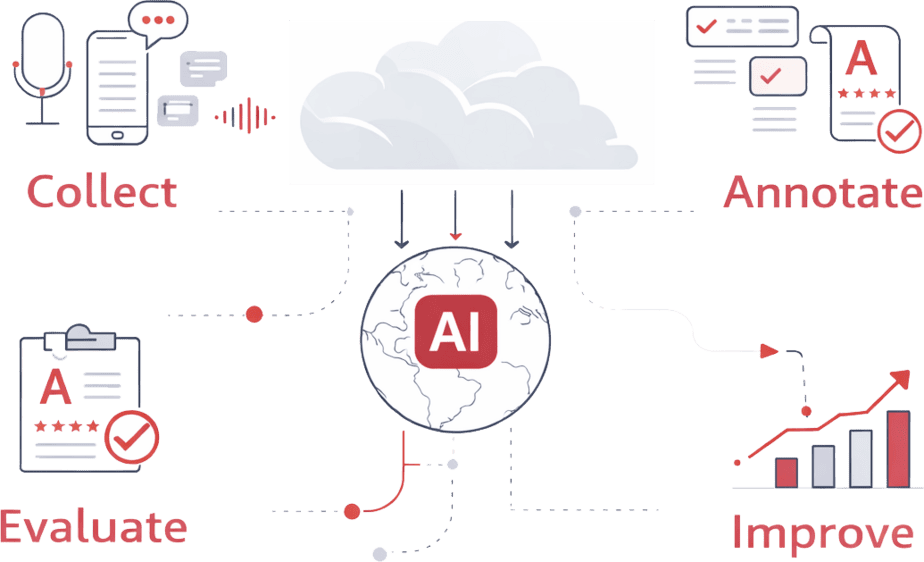

Where Stepes Fits in the AI Lifecycle

Stepes supports multilingual AI development across key stages, helping organizations improve performance, accuracy, and consistency throughout the AI lifecycle.

Multilingual data collection, voice datasets, and linguistic annotation to support diverse and representative training inputs.

Structured datasets for supervised learning, fine-tuning, and instruction tuning across languages.

Human evaluation and linguistic validation to assess accuracy, fluency, and cross-language consistency.

Cross-language QA and performance verification to ensure AI systems are ready for real-world use.

Ongoing evaluation, feedback loops, and model refinement to improve performance over time.

Frequently Asked Questions

Multilingual AI training and evaluation services support the development and optimization of AI systems across languages through data collection, annotation, and human evaluation. The goal is to improve accuracy, consistency, and usability in real-world multilingual environments.

AI data services focus on collecting and labeling data, while AI evaluation focuses on how models perform in real-world scenarios. Evaluation includes human review of outputs, quality scoring, and identifying issues such as inaccuracies, inconsistencies, and hallucinations.

Human evaluation helps identify linguistic nuances, cultural context, and edge cases that automated systems may miss. This is especially important for multilingual AI, where language quality and meaning can vary significantly across regions.

Stepes provides multilingual LLM evaluation services, including prompt testing, response scoring, hallucination detection, and cross-language consistency analysis to improve model performance across markets.

We support multilingual AI training and evaluation across 100+ languages, leveraging professional native linguists with domain expertise to ensure accuracy and consistency.

We use structured workflows that combine linguistic expertise, terminology management, and human-in-the-loop QA processes. This ensures consistent output quality across languages, domains, and use cases.

We support enterprise and regulated environments by applying domain expertise, terminology control, and structured QA processes to meet industry-specific requirements.

Improve Multilingual AI Performance Across Global Markets

Deliver more accurate, consistent, and reliable AI experiences across languages with expert training data, evaluation, and human-in-the-loop review.